Last weekend, a creator called Purz live-streamed something that should make every infrastructure builder pay attention. He opened a terminal, told Claude Code to generate 50 ComfyUI images with specific samplers, LoRA configurations, and file-naming conventions—and then watched the agent do it. No node dragging. No GUI. Just natural language to generated output in minutes.

This was more than a demo. It is becoming the default way power users work, and it breaks four assumptions that most digital asset management (DAM) systems were built on.

Part of our AI-Native DAM Architecture Guide

What Changed: The Terminal Replaced the Canvas

The shift is architectural, not incremental. Creative professionals are moving from manual “node dragging” in ComfyUI to agentic orchestration through tools like comfyui-mcp, VibeComfy, and Claude-Code-ComfyUI-Nodes. These bridges use the Model Context Protocol (MCP) to give AI agents direct control over ComfyUI’s workflow engine.

The comfyui-mcp server alone exposes 31 tools and 10 slash commands. An agent can discover installed models, build workflow JSON, queue generations, manage VRAM, and debug failures—all without a human touching the interface. VibeComfy adds CLI-level workflow parsing so Claude can understand and refactor complex node graphs that would confuse a vanilla language model.
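To make "build workflow JSON, queue generations" concrete, here is a minimal sketch of the kind of payload an agent could assemble for a sampler sweep. It assumes ComfyUI's API-format graph (node ids mapped to a `class_type` and `inputs`) and the stock node names as we understand them; the checkpoint filename, prompt, and helper function names are hypothetical, and this is not how comfyui-mcp is implemented internally. Nothing is sent over the network here; the bytes are what would be POSTed to ComfyUI's `/prompt` endpoint.

```python
import json
import uuid


def build_txt2img_workflow(checkpoint: str, prompt: str, negative: str,
                           seed: int, sampler: str, prefix: str) -> dict:
    """Return a minimal text-to-image graph in ComfyUI's API JSON format.

    Keys are node ids; a value like ["1", 0] means "output 0 of node 1".
    """
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": checkpoint}},
        "2": {"class_type": "CLIPTextEncode",
              "inputs": {"text": prompt, "clip": ["1", 1]}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative, "clip": ["1", 1]}},
        "4": {"class_type": "EmptyLatentImage",
              "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
        "5": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["2", 0],
                         "negative": ["3", 0], "latent_image": ["4", 0],
                         "seed": seed, "steps": 20, "cfg": 7.0,
                         "sampler_name": sampler, "scheduler": "normal",
                         "denoise": 1.0}},
        "6": {"class_type": "VAEDecode",
              "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
        "7": {"class_type": "SaveImage",
              "inputs": {"images": ["6", 0], "filename_prefix": prefix}},
    }


def build_queue_payload(workflow: dict, client_id: str) -> bytes:
    """Serialize the body that would be POSTed to ComfyUI's /prompt endpoint."""
    return json.dumps({"prompt": workflow, "client_id": client_id}).encode("utf-8")


# One payload per sampler: the kind of sweep an agent would script.
samplers = ["euler", "dpmpp_2m", "ddim"]
payloads = [
    build_queue_payload(
        build_txt2img_workflow("example_checkpoint.safetensors",  # hypothetical file
                               "a lighthouse at dusk", "blurry",
                               seed=1000 + i, sampler=name,
                               prefix=f"lighthouse_{name}"),
        client_id=str(uuid.uuid4()),
    )
    for i, name in enumerate(samplers)
]
```

Because the sampler, seed, and filename prefix are plain function arguments, every parameter the agent chose is available as structured data at the moment of generation, which is the hook the rest of this article relies on.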

This isn’t limited to ComfyUI. MCP servers now exist for InvokeAI, for Midjourney via the AceDataCloud API, and for the Stable Diffusion WebUI (Automatic1111/ForgeUI). The pattern is converging: a human sets creative direction, an agent handles execution.

Four Things This Breaks

1. Folder-Based Organization Is Dead

When a human generates images one at a time, folders work. You create a project directory, name your files, and move on. When an agent batch-generates 200 variations in a session, folders become meaningless containers. The meaningful unit of organization is no longer a directory—it’s the creative session: a temporal cluster of generations sharing intent, parameters, and iterative context.

We built session-based clustering into Numonic specifically because agentic workflows demand temporal organization, not spatial filing.
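As an illustration of temporal clustering (a generic sketch, not Numonic's implementation), generations can be grouped into sessions by splitting wherever the gap between consecutive timestamps exceeds a threshold. The 30-minute gap and the sample timestamps are assumptions chosen for the example.

```python
from datetime import datetime, timedelta


def cluster_sessions(timestamps, max_gap=timedelta(minutes=30)):
    """Group timestamps into sessions; a gap larger than max_gap starts a new one."""
    sessions = []
    for ts in sorted(timestamps):
        if sessions and ts - sessions[-1][-1] <= max_gap:
            sessions[-1].append(ts)
        else:
            sessions.append([ts])
    return sessions


generated_at = [
    datetime(2026, 3, 14, 10, 0),
    datetime(2026, 3, 14, 10, 4),
    datetime(2026, 3, 14, 10, 9),
    datetime(2026, 3, 14, 15, 30),
    datetime(2026, 3, 14, 15, 31),
]
sessions = cluster_sessions(generated_at)
print([len(s) for s in sessions])  # [3, 2]
```

A production version would likely also weigh shared prompts and parameters, since the session described above is defined by intent as well as time.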

2. Metadata Provenance Becomes Non-Optional

The agent knows exactly which LoRA, which seed, which sampler, and which prompt version it used. But that context lives in ephemeral terminal output unless your storage layer captures it structurally. JSON manifests generated alongside images help, but they’re fragile—tied to a specific agent’s local environment and easily separated from the assets they describe.

The IPTC released version 2025.1 of its Photo Metadata Standard with four new fields for the agentic era: AI System Used, AI System Version, AI Prompt Information, and AI Prompt Writer Name. These are a start, but they don’t capture the reasoning chain—the series of internal decisions and sub-agent calls that led to a specific creative output.
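One way to make a manifest less fragile is to bind it to the image by content hash rather than by path. The sketch below assumes a simple JSON record: the four labels are the IPTC field names listed above used as plain dictionary keys (not the standard's actual XMP property identifiers), and the function name, sample values, and `generation` block are hypothetical.

```python
import hashlib
import json


def build_provenance_record(image_bytes: bytes, system: str, version: str,
                            prompt: str, prompt_writer: str, params: dict) -> dict:
    """Build a sidecar record bound to the image by its SHA-256 digest."""
    return {
        "asset_sha256": hashlib.sha256(image_bytes).hexdigest(),
        # Labels follow the four IPTC 2025.1 field names listed above.
        "iptc": {
            "AI System Used": system,
            "AI System Version": version,
            "AI Prompt Information": prompt,
            "AI Prompt Writer Name": prompt_writer,
        },
        # Generation parameters are not covered by those four fields,
        # so this sketch keeps them in a separate block.
        "generation": params,
    }


record = build_provenance_record(
    image_bytes=b"placeholder image bytes",  # stand-in for real file contents
    system="ComfyUI",
    version="unknown",
    prompt="a lighthouse at dusk",
    prompt_writer="Claude Code",
    params={"sampler": "euler", "seed": 1000, "lora": "example_lora.safetensors"},
)
manifest_line = json.dumps(record, sort_keys=True)
```

The record can be matched back to the image as long as the bytes are unchanged. It still says nothing about the reasoning chain, which is the gap the paragraph above describes.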

“There is a desperate need for a Universal Context Connector that can map an agent’s reasoning directly to the permanent record of the digital asset.”

Source: SDG Group, Metadata and Agentic AI Report, 2026

3. The Filename Hygiene Problem Scales Vertically

Purz makes this point explicitly in his stream: Claude is better at naming files than humans. True. But naming files is a band-aid over a structural problem. What you need isn’t better filenames but a queryable provenance layer. In Numonic, the search grammar query `model_name:dreamshaper model_type:lora created:<2026-03-15` returns every asset generated with a specific LoRA before a given date. That’s three structured clauses, resolved in milliseconds. Try that with filenames.
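To show why structured clauses beat filenames, here is a toy evaluator for a query of that shape. The parsing rules (whitespace-separated `field:value` clauses, with an optional leading `<` or `>` on the value) are our assumption for illustration and not Numonic's actual grammar or parser; the asset records are made up.

```python
from datetime import date


def parse_query(query: str) -> list:
    """Split 'field:value' clauses; a leading '<' or '>' on the value is a comparison."""
    clauses = []
    for token in query.split():
        field, _, value = token.partition(":")
        op = "="
        if value[:1] in ("<", ">"):
            op, value = value[0], value[1:]
        clauses.append((field, op, value))
    return clauses


def matches(asset: dict, clauses: list) -> bool:
    """Return True only if the asset satisfies every clause."""
    for field, op, value in clauses:
        actual = asset.get(field)
        if actual is None:
            return False
        if field == "created":  # compare dates as dates, not strings
            actual, value = date.fromisoformat(actual), date.fromisoformat(value)
        if op == "=" and actual != value:
            return False
        if op == "<" and not actual < value:
            return False
        if op == ">" and not actual > value:
            return False
    return True


assets = [
    {"id": "a1", "model_name": "dreamshaper", "model_type": "lora", "created": "2026-03-01"},
    {"id": "a2", "model_name": "dreamshaper", "model_type": "lora", "created": "2026-03-20"},
    {"id": "a3", "model_name": "other", "model_type": "checkpoint", "created": "2026-02-10"},
]
clauses = parse_query("model_name:dreamshaper model_type:lora created:<2026-03-15")
hits = [a["id"] for a in assets if matches(a, clauses)]
print(hits)  # ['a1']
```

The same question asked of filenames would depend on every file having been named with the model, its type, and a sortable date, consistently, by every agent and every human.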

4. MCP Is the Integration Protocol

The same Model Context Protocol that lets Claude control ComfyUI can let it store, search, and curate the output in a DAM. One agent, full lifecycle: generate, store, organize, retrieve. The Numonic MCP server already exposes tools for asset search, collection management, and publication—the same protocol surface that comfyui-mcp uses for generation.
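At the wire level, "same protocol surface" means the agent issues the same kind of message to both servers. MCP tool invocations are JSON-RPC 2.0 requests with the method `tools/call`; the sketch below builds three such requests for a generate, store, search sequence. The server labels, tool names, and arguments are hypothetical and do not reflect the real tool lists of comfyui-mcp or the Numonic MCP server.

```python
import itertools
import json

_request_ids = itertools.count(1)


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tool names on two different MCP servers.
lifecycle = [
    ("generation-server", tool_call("queue_workflow", {"prompt": "a lighthouse at dusk"})),
    ("dam-server", tool_call("store_asset", {"path": "output/lighthouse_0001.png"})),
    ("dam-server", tool_call("search_assets", {"query": "model_type:lora"})),
]
for server, message in lifecycle:
    print(server, json.dumps(message))
```

Only the tool name and arguments change between the creative engine and the asset store, which is why a single agent can span the whole lifecycle.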

This is the pattern that we wrote about in February: MCP as the universal connector between creative engines and asset infrastructure. The agentic workflow makes this integration path not just convenient but necessary.

Who Else Is Moving: The Market Landscape

The shift isn’t happening in a vacuum. Adobe and NVIDIA announced a strategic partnership at GTC 2026 focused on “agentic creative and marketing workflows,” integrating NVIDIA’s Agent Toolkit into the Firefly ecosystem. The BBC, NBCUniversal, ITV, RAI, and Disney’s ETC are collaborating on the FRAMES project at IBC 2026—using agents to automate pre-production across massive media archives.

Legacy DAMs like Bynder and Aprimo have rebranded as “Agentic DAMs,” but their agents operate as internal task runners for auto-tagging and resizing—walled gardens that don’t communicate with the broader ecosystem of Claude Code skills or ComfyUI nodes. The infrastructure gap isn’t auto-tagging. It’s ingesting the raw telemetry of an external agentic workflow as a primary artifact of the asset’s provenance.

The Compliance Dimension: August 2026

Article 50 of the EU AI Act introduces transparency obligations that become legally enforceable in August 2026. AI-generated content must be marked in a machine-readable format. Any content that “realistically depicts persons, objects, places, or events” requires explicit labeling at the time of first interaction.

For agentic workflows that generate content in batches, this creates a massive compliance burden. If an agent generates 1,000 images and publishes them automatically, each one must be detectable as artificially generated. The DAM becomes a compliance gateway—validating every asset against Article 50 requirements before it leaves the internal environment.
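A compliance gateway can be pictured as a pre-publication check that every asset in a batch must pass. The sketch below is a simplification under stated assumptions: the `Asset` fields and the two rules are our hypothetical reading of the obligations described above, not a complete or authoritative encoding of Article 50.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    asset_id: str
    ai_generated: bool
    has_machine_readable_marker: bool = False
    depicts_realistic_content: bool = False
    has_visible_label: bool = False


def publication_blockers(asset: Asset) -> list:
    """Return the reasons an asset may not be published; empty means it passes."""
    if not asset.ai_generated:
        return []
    blockers = []
    if not asset.has_machine_readable_marker:
        blockers.append("missing machine-readable AI marker")
    if asset.depicts_realistic_content and not asset.has_visible_label:
        blockers.append("missing explicit label for realistic content")
    return blockers


def gate(batch):
    """Split a batch into publishable asset ids and a map of blocked ids to reasons."""
    publishable, blocked = [], {}
    for asset in batch:
        reasons = publication_blockers(asset)
        if reasons:
            blocked[asset.asset_id] = reasons
        else:
            publishable.append(asset.asset_id)
    return publishable, blocked


batch = [
    Asset("img-001", ai_generated=True, has_machine_readable_marker=True),
    Asset("img-002", ai_generated=True),
    Asset("img-003", ai_generated=True, has_machine_readable_marker=True,
          depicts_realistic_content=True),
]
publishable, blocked = gate(batch)
print(publishable)  # ['img-001']
print(blocked)
```

Running the check per asset rather than per batch matters for agentic workflows: one unmarked image in a run of 1,000 should block only itself.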

Most social platforms still strip C2PA metadata on upload. Adobe has responded with a “Cloud Record” system where provenance data is stored externally and retrieved even when file metadata is lost. For a Data Vault-first architecture, this “soft binding” pattern maps naturally to immutable satellite records that persist regardless of what happens to the file itself.

Why Data Vault 2.0 Was Built for This

I spent a decade governing systems that can’t afford to fail—energy grids serving 30 million Europeans, insurance platforms processing billions in claims. The architecture pattern those systems rely on is Data Vault 2.0, and it solves the agentic provenance problem by design:

- Immutability by design. Every metadata change creates a new satellite record. You can reconstruct the state of any asset at any point in time. For EU AI Act Article 12 compliance, this isn’t optional—it’s table stakes.

- Separation of concerns. Hubs store business keys (asset identity). Links capture relationships (which prompt created which image, which model version was used). Satellites hold the attributes that change over time. Provenance data never overwrites—it accumulates.

- Temporal queries. “Show me every asset generated with a specific LoRA before a given date.” With Data Vault, that query joins the model usage link to the asset hub and filters on `load_datetime`. With a traditional schema that overwrites metadata in place, the history needed to answer it may no longer exist. In Numonic, it’s a search grammar query that runs today.
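The three properties above can be sketched with an in-memory SQLite database. This is a deliberately small model, not Numonic's schema: table and column names are illustrative, hash keys are shortened to readable strings (a real Data Vault derives them by hashing the business key), and record-source columns are omitted.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE hub_asset (asset_hk TEXT PRIMARY KEY, asset_bk TEXT, load_datetime TEXT);
CREATE TABLE hub_model (model_hk TEXT PRIMARY KEY, model_bk TEXT, load_datetime TEXT);
CREATE TABLE link_asset_model (
    link_hk TEXT PRIMARY KEY, asset_hk TEXT, model_hk TEXT, load_datetime TEXT);
CREATE TABLE sat_asset_metadata (
    asset_hk TEXT, load_datetime TEXT, title TEXT,
    PRIMARY KEY (asset_hk, load_datetime));

INSERT INTO hub_asset VALUES
    ('a1', 'asset-001', '2026-03-01'), ('a2', 'asset-002', '2026-03-20');
INSERT INTO hub_model VALUES ('m1', 'dreamshaper', '2026-02-01');
INSERT INTO link_asset_model VALUES
    ('l1', 'a1', 'm1', '2026-03-01'), ('l2', 'a2', 'm1', '2026-03-20');
-- A metadata change is a new satellite row, never an UPDATE.
INSERT INTO sat_asset_metadata VALUES
    ('a1', '2026-03-01', 'draft'), ('a1', '2026-03-10', 'final');
""")


def assets_using_model_before(model_bk, cutoff):
    """Join the model-usage link to the asset hub and filter on load_datetime."""
    rows = conn.execute("""
        SELECT a.asset_bk
        FROM link_asset_model AS l
        JOIN hub_asset AS a ON a.asset_hk = l.asset_hk
        JOIN hub_model AS m ON m.model_hk = l.model_hk
        WHERE m.model_bk = ? AND l.load_datetime < ?
        ORDER BY a.asset_bk
    """, (model_bk, cutoff)).fetchall()
    return [r[0] for r in rows]


def title_as_of(asset_hk, when):
    """Reconstruct an attribute as it stood at a point in time."""
    row = conn.execute("""
        SELECT title FROM sat_asset_metadata
        WHERE asset_hk = ? AND load_datetime <= ?
        ORDER BY load_datetime DESC LIMIT 1
    """, (asset_hk, when)).fetchone()
    return row[0] if row else None


print(assets_using_model_before("dreamshaper", "2026-03-15"))  # ['asset-001']
print(title_as_of("a1", "2026-03-05"))  # draft
print(title_as_of("a1", "2026-03-15"))  # final
```

Dates are stored as ISO 8601 strings so that plain string comparison orders them correctly. Both earlier and later titles remain queryable because the satellite only ever receives inserts.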

We built Numonic on this architecture specifically because the volume and velocity of AI-generated content demands infrastructure that was designed for auditability, not retrofitted for it.

What’s Table Stakes and What’s Differentiating

Here is where we see the industry heading over the next 12 months:

| Capability | Status | Requirement |
|---|---|---|
| AI auto-tagging, semantic search | Table stakes | Index by concepts and intent, not just keywords |
| C2PA, IPTC 2025.1, XMP support | Table stakes | Native metadata interoperability |
| Agentic provenance capture | Differentiator | Capture agent reasoning and telemetry as part of the asset record |
| EU AI Act compliance automation | Differentiator | Real-time validation against Article 50 before publication |
| MCP/A2A connectivity | Differentiator | External agents can store, search, and curate via standardized protocols |

Key Takeaways

- Agentic creative workflows are production-ready. Tools like comfyui-mcp, VibeComfy, and Claude-Code-ComfyUI-Nodes give AI agents full orchestration control over generative AI engines.

- The asset management problem just scaled by an order of magnitude. When one person can generate hundreds of images from a terminal in minutes, folder-based organization, manual naming, and file-level metadata are no longer sufficient.

- Temporal clustering replaces spatial filing. The meaningful unit of organization is the creative session—a temporal cluster of generations sharing intent and parameters—not a directory path.

- MCP is the integration protocol. The same protocol that connects agents to creative engines should connect them to asset management. One agent, full lifecycle.

- Compliance becomes a DAM responsibility. With the EU AI Act Article 50 deadline in August 2026, the DAM must serve as a compliance gateway for agent-generated content.

- Immutable, temporal provenance is non-negotiable. Data Vault 2.0 provides the architectural foundation for capturing the full agentic lifecycle—not just the final output, but the reasoning that produced it.

How We Made This: Two Flavours of Agentic Creative Workflow

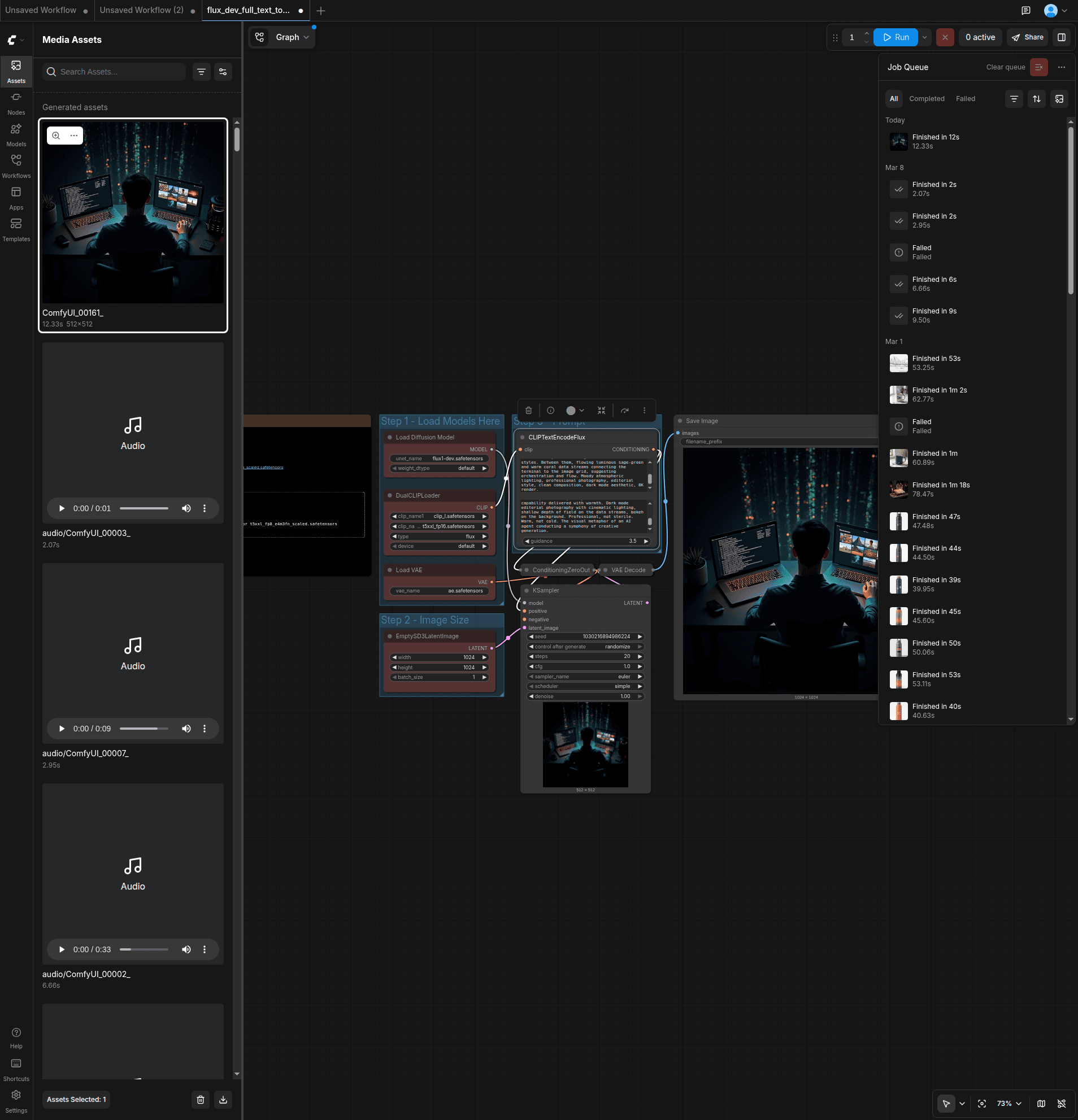

The hero image above was generated by Claude Code. Not by a human using ComfyUI—by the AI agent itself, driving a browser.

Purz’s approach in his live stream was headless MCP orchestration: Claude Code connects to ComfyUI’s API via the comfyui-mcp server, builds workflow JSON, and queues generations without ever opening a GUI. It’s fast, programmatic, and powerful.

Our approach for this article was different: browser-based agent orchestration. I logged into ComfyUI Cloud, then handed the browser to Claude Code via Playwright MCP. The agent navigated the templates, searched for Flux.1 Dev, typed the prompts (informed by Numonic’s brand palette and design system), clicked Run, and downloaded the result. The same GUI a human would use—driven by an agent.

Both approaches are valid patterns for the agentic creative era:

- Headless MCP is faster and more programmatic, ideal for batch workflows and CI/CD-style generation pipelines

- Browser-based Playwright is more accessible, requires no MCP server setup, and works with any cloud-hosted generative tool that has a web interface

After generation, we stored the images in Numonic and published them through our collections infrastructure. The full pipeline—generate in ComfyUI, store in Numonic, publish via collections, embed in the blog—is the dogfooding loop closing in real time. The headless MCP integration is next on the roadmap.