Casey (CEO) and I (CTO) recorded a conversation about a project we've been turning over for a while: what would it actually take to stage a meaningful slice of the Shakespeare canon using AI video and a small standing troupe of synthetic actors?

It started as a thought experiment. We've now moved it into territory where we're running real experiments. We're not committing to all 39 plays as feature-length films — that's the wrong shape of promise to make at this stage. What we are committing to is running this as a public R&D programme with agile intent: the videos we make about the process, posts like this one, the published collections inside Numonic, and the downstream YouTube releases. The codebase stays in our private GitLab. What we publish externally, we publish deliberately.

The Right Question Isn't “Can the Model Render It”

When teams pick up an AI-video stack and aim it at something ambitious, they tend to hit a wall around piece four or five: not because the renderer fails, but because they can't remember what they made on piece one. Same character, different face. Same set, different walls. Same wardrobe, different fabric. The output reads like four different productions stitched together. The space is moving fast and there's serious multi-minute work shipping every week — but the parts that don't show up in the highlight reel are exactly the parts that don't survive scaling: continuity, identity, asset retrieval, the editor's sanity at piece forty.

This isn't an AI-only problem. It's the same problem any studio solves on a real production: an editor needs the second take from the third day on the C-camera; a PA needs to know whether the wardrobe in scene 12 matches scene 47. Every studio that scales past student-film volume builds — or buys — a memory system for it. EDLs, on-set continuity logs, finishing-house archives, asset libraries. Studio memory is the part that doesn't make the highlight reel.

Studio memory is the part that doesn’t make the highlight reel. When AI compresses the production timeline, the memory problem arrives faster, not slower.

When AI compresses the production timeline, the studio-memory problem doesn't go away. It arrives faster and louder. By the time a small team has ten usable minutes, they are already drowning in candidate takes, character variants, prompt revisions, and conditioning images they will never find again.

So the right question isn't can the model render Shakespeare. It's can you remember what you rendered yesterday well enough to render today with it. That is the problem Numonic is built to solve, and Shakespeare is the test case.

What Numonic Is, in Studio Terms

Numonic is a persistent domain memory layer for creative production. Not a context-window cache for an LLM. A domain memory: a queryable, governed substrate where every asset — the take, the still, the LoRA, the prompt, the conditioning image, the final cut — lives with provenance, with relationships to the other assets it came from or fed into, and with the production-side metadata an editor or supervisor would actually look for.

The studio analogue is a finishing-house archive that an automated agent can read and write, and that a human supervisor can audit at any point. The thing that lets a real studio scale is that the editor and the PA both trust the archive. We're aiming for the same thing, with agents handling more of the editorial functions over time, and humans staying in the loop for the calls that still need taste. Our ambition is to serve customers running this kind of operation at scale — and the Shakespeare project is how we close the gap between that vision and production-grade.

The Meta-Flow

The loop we're building toward, drawn at the level a small studio could replicate:

From archetype brief to locked identity

Each arrow is a place where a human currently sits, an agent currently sits, or both. The work is to keep moving the boundary toward agents, deliberately, while documenting which calls stayed with humans and why.

Work Package 0 — Set Up the Room

Before any rendering happens, you need somewhere for the renders to land that doesn't immediately turn into a swamp. For us that meant three small artefacts:

- A cohort collection with four archetype sub-collections, one per pillar actor.

- A small tag taxonomy — actor identity, asset kind (hero / reference), identity-roll number, model used, a

chosen:trueflag. About a dozen tag families total. - An asset-description template so every ingested image carries a standardised metadata block (subject, framing, wardrobe, location, prompt seed) rather than free-form chaos.

That's it. A couple of hours, mostly authoring. We're flagging this because if you run a production company and you're contemplating something similar, the entry cost is low — the durable asset is the taxonomy + collection structure, and both are short documents. The point of work package 0 isn't the documents; it's that they exist before the first render lands.

Work Package 1 — Casting

The first real production work package was casting a four-actor pillar troupe. Each actor an archetype with a role portfolio across the canon, a locked signature monologue, and a locked cameo. Pre-fame imagery only — we want them to read as emerging artists at the start of a career arc, not stars at the end of one.

Each archetype's render graph emitted four hero stills + a vision-derived character bible + a 25-prompt prompt pack. We dispatched three rolls per archetype, ingested the bytes, enriched the metadata, then sat down and picked one roll per archetype.

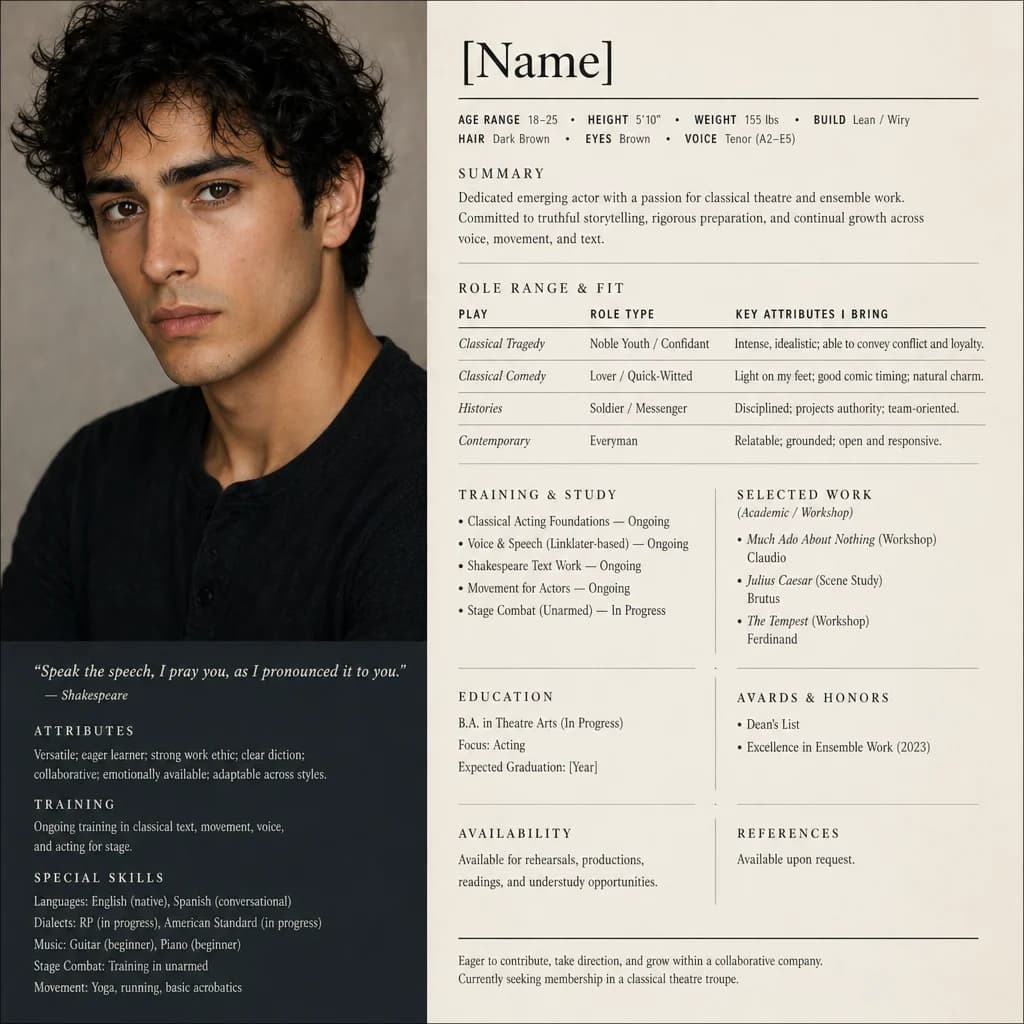

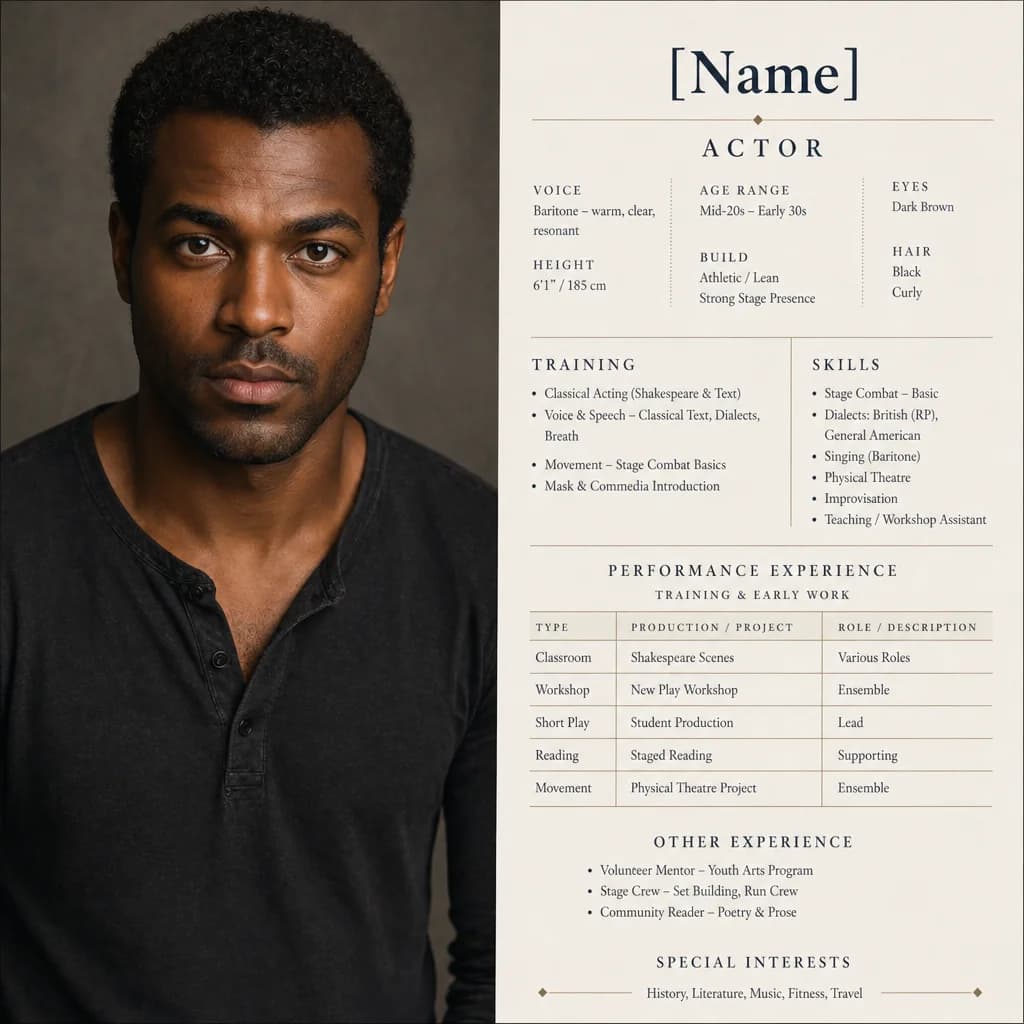

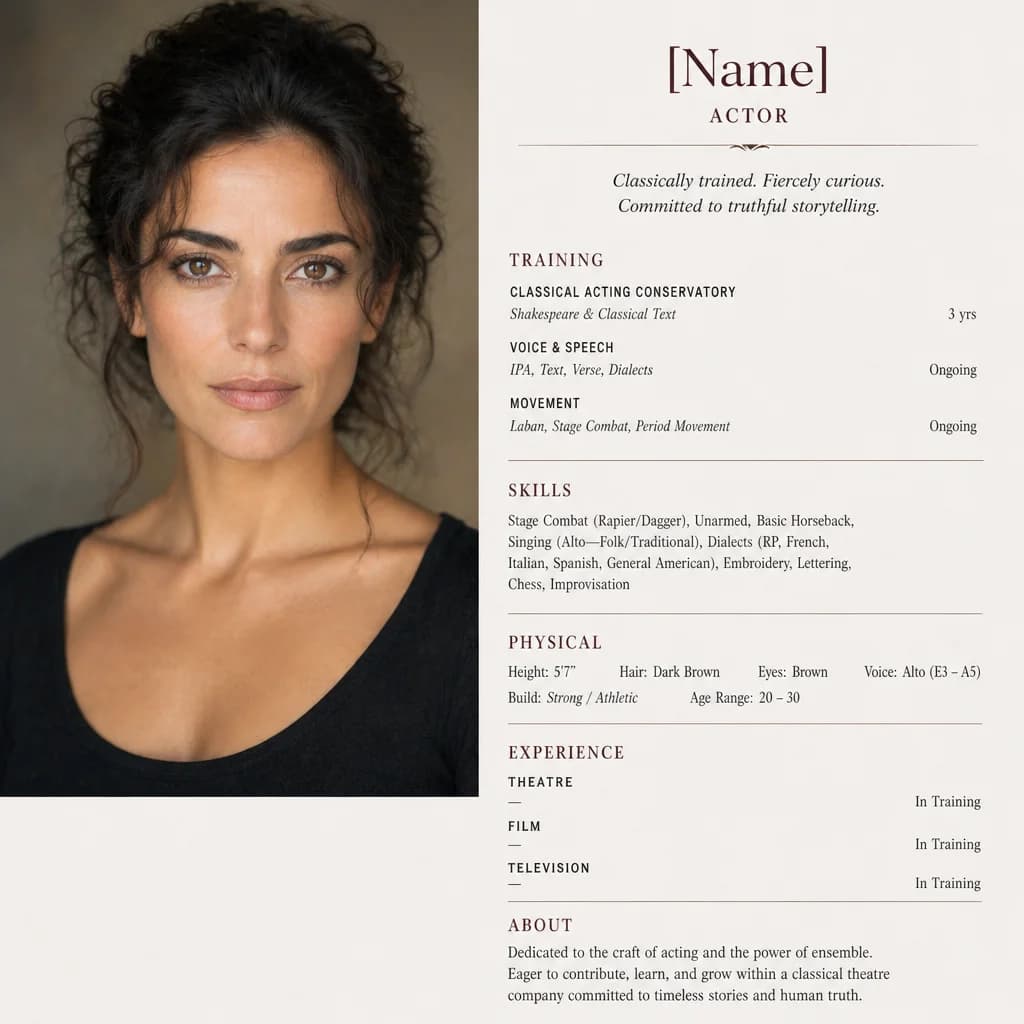

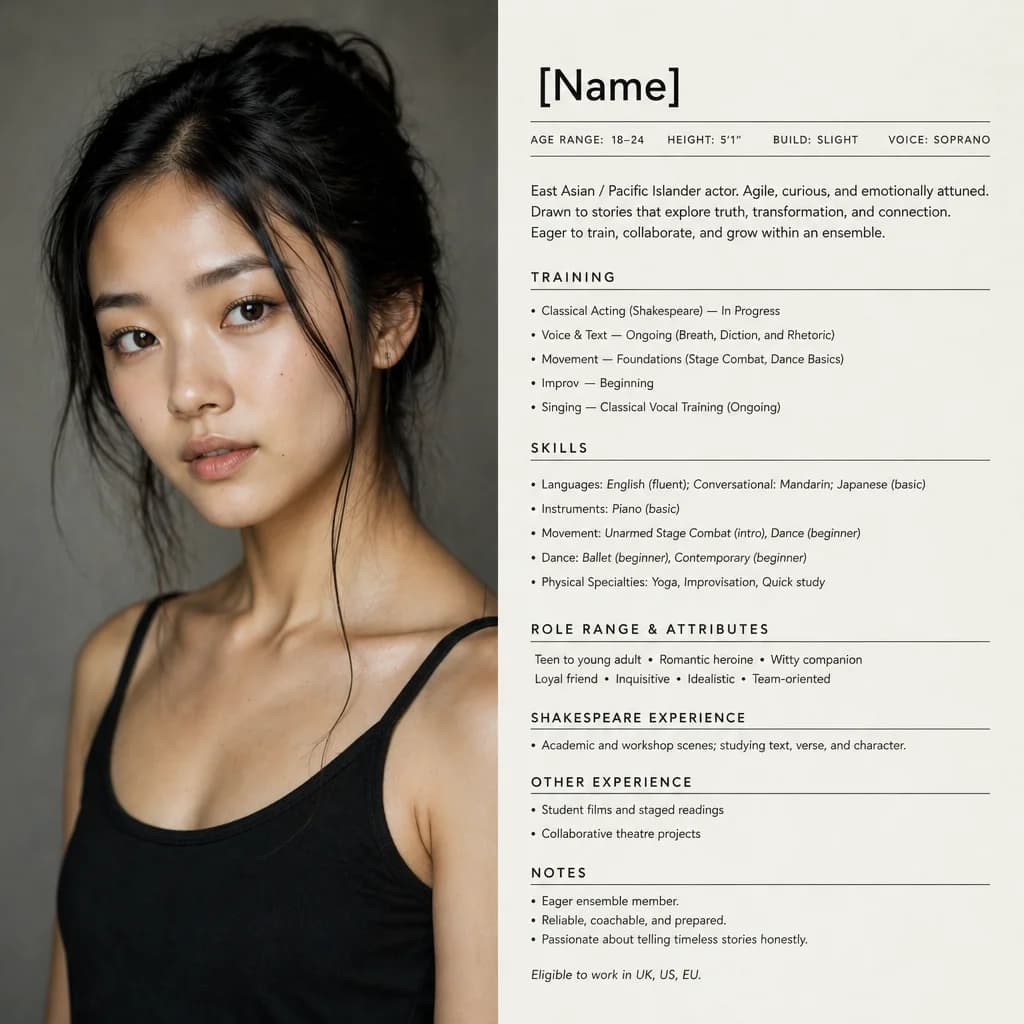

Here are the four.

Actor A — Nico Alvarez

Twenty-two, San Diego. Latinx / Mediterranean. Romantic-intellectual register; star-lead capable.

“He carries the romantic-intellectual weight without the canonical white-Hamlet gloss. We slated him with To be, or not to be and the camera leaned in. Hamlet, Henry V, Romeo, Othello, Orsino — same actor, all the way down.”

Actor B — Darren Cole

Mid-forties, London-based. Natural contemporary English; RP-capable when the scene asks. Danger / authority / power.

“Natural London is the room; he can land RP when the scene needs it. He read Tomorrow, and tomorrow and the tempo problem solved itself — the future-tense exhaustion is already in the face. Macbeth, Richard III, Prospero, Claudius, Falstaff.”

Actor C — Elena Marquez

Thirties, Seville. Light Andalusian-Spanish; can shift to Neutral American on cue. Tragic queenship + moral pressure.

“She gave us Out, damned spot and the moral weather changed without effort. Lady Macbeth, Desdemona, Queen Margaret, Gertrude, Lady Anne — the register is locked from the first read.”

Actor D — Mina Takahashi

Eighteen to twenty-four, Seattle. East Asian, Japanese-American. Romantic-comic-transformational.

“Read her against Gallop apace and the ceiling lifted. Juliet, Rosalind, Viola, Miranda, young Queen Elizabeth — all of them live in the same actor. Same face, multiple lives.”

That's the troupe. Identity is locked at four points in casting space; everything we make next conditions on these.

Six Things Broke (Which Is the Whole Point)

Across twelve dispatches we logged six discrete failures worth naming. PoCs are surface-area generators; this one generated:

- Filename collisions in bulk ingest — the watcher deduped by filename rather than content, and roughly twenty-six files were lost to a flat namespace before we re-pointed to a content-addressed staging path. Resolved at platform level by URL-based ingest, which has no filename namespace at all.

- One vision call returned literally the string “FAILED TO OUTPUT STRING” — the imagery survived; the bible didn't. The parser refused to validate, which is how we caught it. This is the kind of failure that needs a typed handler, not an exception block.

- One bible mis-attributed a monologue — Polonius's advice to Laertes instead of the locked Hamlet 3.1 soliloquy. Image identity was still usable; the textual anchor wasn't. Monologue-lock checking now sits in the parser.

- One bible left

ethnicityblank while the imagery clearly carried a specific identity. Soft-fail, surfaced for review, did not block the cohort. - Edit-gate friction on metadata curation — only the original uploader could edit asset metadata. Replaced with a tenant-member edit gate, with a database test suite enforcing the new rule.

- One image landed via a recovery path rather than the canonical ingest — its tag set survived, but cohort-specific tags didn't apply cleanly. Curation was intact; cohort retag is a one-line follow-up.

Six failures across the surface area of one casting cohort. This isn't a negative result. It's the exact kind of evidence we needed before we wire any of this into a customer-facing workflow.

Where Humans Currently Lead (and Where That Boundary Is Being Tested)

Sitting in the founders' chairs across this work, we made a number of judgement calls: defining the four archetypes; locking the signature monologues; setting demographic constraints (Actor A specifically not white and not Black, supporting our diversity initiative); approving the per-archetype budget cap; signing off on the closure of the work package.

The conventional wisdom is that some of these are inherently human: taste, money, policy, sign-off. We're not so sure. An accounting agent could plausibly own a budget cap inside an envelope set by humans. An archetype-design agent could draft demographic and role-portfolio briefs for human ratification. The sign-off itself is a workflow that could be issued, attested to, and audited under an agent identity.

We're calling these experimental territory — places where humans currently lead. We're not committing in advance to leaving them there. Part of running this in public is finding out, with evidence, which of those decisions can be agentified responsibly and which can't, and what the right governance pattern is for the ones that can.

What's Next

The very next work package is reference frames: condition each archetype on its locked identity and render reference stills for the canonical scenes and locations the troupe will need across the picked plays. Each frame becomes an identity-anchored conditioning image for the video work that follows.

If you want the wider arc — how the agent roles compose, where the persistent identity entity and the visual embedding pipeline fit — Casey and I cover it on the YouTube interview.

If you run a new-media studio and you're looking at the same scaling problem from the other side, we'd genuinely like to hear from you. The interesting thing about studio memory is that it gets harder for everyone the moment AI comes into the pipeline; the more honest the conversation about what's actually working, the faster the whole space moves.

— Jesse (CTO) & Casey (CEO), Numonic Labs

Watch the CEO/CTO Conversation

A 30-minute conversation between Casey and Jesse on the Shakespeare project, the Synthetic Studio Showcase milestone, and where the human-agent boundary is being tested.

Watch on YouTube